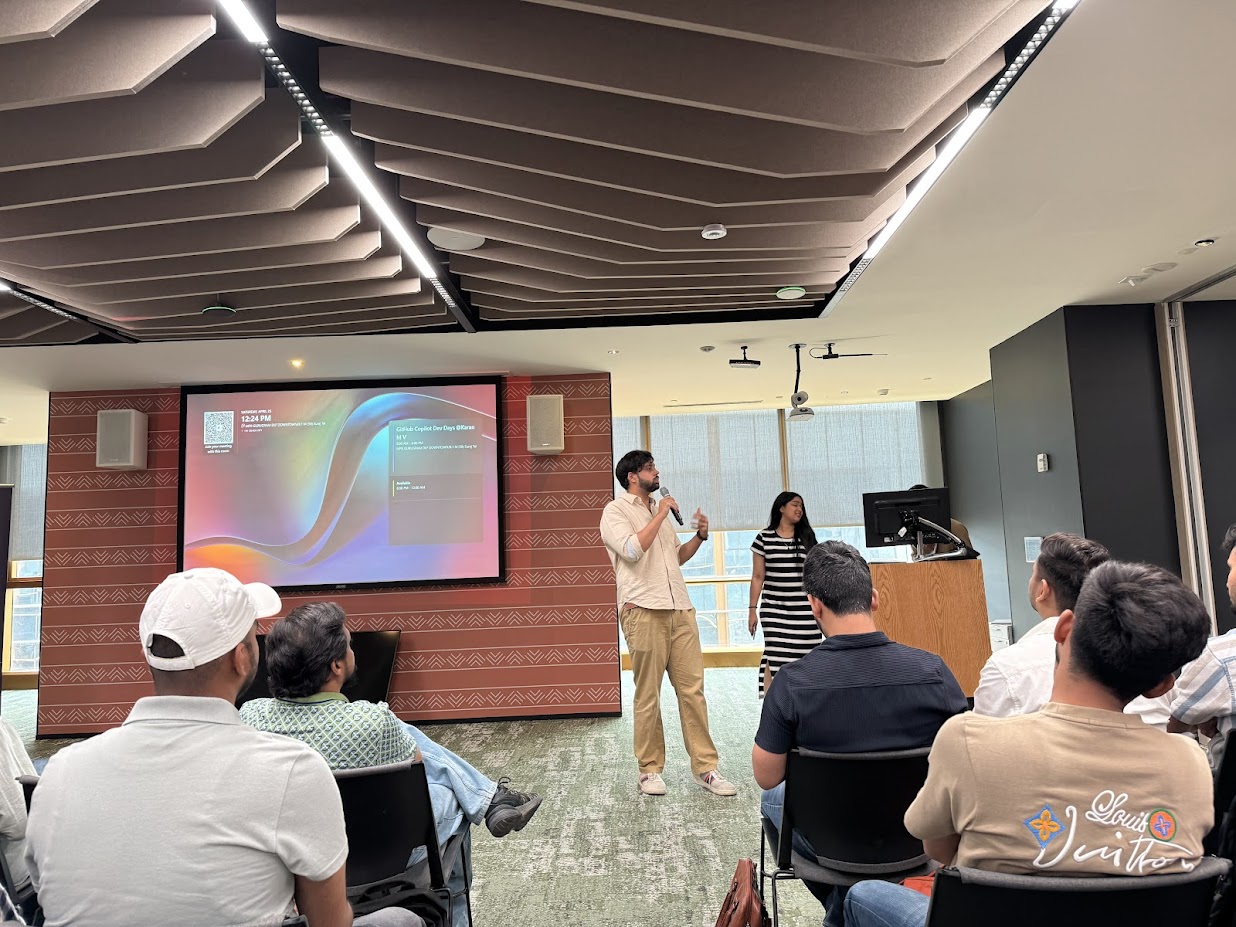

I attended GitHub Copilot Dev Days | Delhi-NCR (GitTogether) recently, and it turned out to be one of those rare events that does more than give you a few notes for later. It changes the shape of the questions you carry back home.

A big thank you to Vipul Gupta and Chhavi Garg for putting the room together. Chhavi set the tone right from the opening: interactive, energetic, and genuinely good at keeping people awake in the best possible way.

Also, the Microsoft IPL song between sessions was not on my bingo card. Somehow, it worked.

The part that felt closest to production

Ravindra Verma gave the kind of talk that immediately pulls you out of demo-land.

AI coding agents look magical when the task is clean, the repo is small, and the outcome is already half-implied by the prompt. Production is not that polite. Production has 2 AM Slack messages. Production has permissions. Production has the possibility of someone, or something, running a command that confidently does exactly the wrong thing.

That was the part that stayed with me: not fear of AI agents, but respect for the blast radius.

The practical lesson was simple and sharp:

- Keep humans in the loop.

- Give agents restricted access.

- For dangerous actions, start with no access at all.

- Let trust grow incrementally.

That framing landed. We do not hand a junior engineer root access on day one because they are enthusiastic. We should not hand an AI agent the keys because it completed three impressive tasks in a row.
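The four rules above can be sketched as a deny-by-default policy. This is only a minimal illustration of the idea, not anything shown in the talk: the class `AgentPolicy`, the helper `run_action`, and action names such as `read_file` or `drop_database` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentPolicy:
    """Hypothetical policy: anything not listed is denied by default."""
    allowed: set = field(default_factory=set)          # runs without review
    needs_approval: set = field(default_factory=set)   # a human must confirm

    def decide(self, action: str) -> str:
        if action in self.allowed:
            return "allow"
        if action in self.needs_approval:
            return "ask_human"
        return "deny"  # dangerous or unknown actions start with no access

    def promote(self, action: str) -> None:
        """Grow trust one step at a time: deny -> ask_human -> allow."""
        if action in self.needs_approval:
            self.needs_approval.discard(action)
            self.allowed.add(action)
        elif action not in self.allowed:
            self.needs_approval.add(action)


def run_action(policy: AgentPolicy, action: str,
               execute: Callable[[], None],
               approve: Callable[[str], bool]) -> str:
    """Run `execute` only if the policy, and a human where required, agree."""
    decision = policy.decide(action)
    if decision == "deny":
        return "blocked"
    if decision == "ask_human" and not approve(action):
        return "rejected"
    execute()
    return "executed"


# Illustrative usage with made-up action names.
policy = AgentPolicy(allowed={"read_file"}, needs_approval={"open_pull_request"})
print(policy.decide("read_file"))          # allow
print(policy.decide("open_pull_request"))  # ask_human
print(policy.decide("drop_database"))      # deny
```

The design choice worth noticing is that trust only moves one step per `promote` call, so an action can never jump from "no access" to "runs unattended" without passing through a human-reviewed stage.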

Guardrails are not the opposite of creativity

Vipul had a clean way of breaking down ideas without making them feel smaller. My favorite part was his point on AI creativity.

At first, it feels natural to think constraining an AI means telling it exactly what to do. But the more useful move is often telling it what not to do.

Give it guardrails, not a script.

That sounds counterintuitive until you sit with it. A script makes the model a typist. Guardrails make it a collaborator inside a safe boundary. You keep the creative thinking alive while still protecting the parts of the system that matter.

That is a better mental model for how I want to use AI in my own workflow too. Not as a chaos engine, not as a replacement for taste, but as something useful when the boundary is clear.

Semantic search finally clicked differently

Akshat Sharma ran a session on semantic search that genuinely taught me something new.

I walked away rethinking retrieval from the ground up. That is usually the sign of a good talk: it changes how you see something you thought you already understood.

The example that stuck was Yahoo vs Google. It was not only that Google had better technology in a narrow technical sense. Google understood the intent behind a query. It got closer to what the user meant, even when the user was not fully sure how to say it.

That is semantic search in one clean sentence: understanding meaning, not just matching words.

Once you see search that way, you start seeing the same pattern everywhere. Docs, support bots, internal knowledge bases, product discovery, AI copilots, even how we ask questions in a team. The real value is often hiding in the gap between what someone typed and what they meant.
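To make the gap between "typed" and "meant" concrete, here is a toy comparison of keyword matching and vector similarity. It is a sketch under heavy assumptions: the document titles, the query, and the three-dimensional vectors are all invented by hand for illustration. A real system would get its vectors from a trained embedding model, which this example does not include.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_search(query, docs):
    """Return docs that share at least one exact word with the query."""
    query_words = set(query.lower().split())
    return [d for d in docs if query_words & set(d.lower().split())]


def semantic_search(query_vec, doc_vectors, top_k=1):
    """Rank docs by cosine similarity to the query vector."""
    ranked = sorted(doc_vectors,
                    key=lambda d: cosine(query_vec, doc_vectors[d]),
                    reverse=True)
    return ranked[:top_k]


# Hand-made vectors (NOT from a real model). The dimensions loosely stand
# for "account access", "security setup", and "payments".
DOC_VECTORS = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Quarterly billing and invoices": [0.0, 0.2, 0.9],
    "Setting up two-factor authentication": [0.7, 0.6, 0.1],
}
QUERY = "i cannot log in"
QUERY_VECTOR = [0.8, 0.3, 0.0]  # also invented for this example

print(keyword_search(QUERY, DOC_VECTORS))         # []
print(semantic_search(QUERY_VECTOR, DOC_VECTORS)) # ['How to reset your password']
```

The keyword search finds nothing because the query shares no words with any title, while the vector comparison still surfaces the password article because the two sit close together in the made-up "meaning" space.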

The quiet conversation beside the stage

Then there was Rudrank Riyam.

He was sitting right beside me, sipping coffee, quietly coding something. I gathered my courage and started a conversation. It turned out he had built an entire pipeline that could take an app from a chat conversation all the way to App Store publishing, and that the work had been acquired.

That was one of the most memorable moments of the day for me, partly because it was not on the stage.

Events are easy to measure by sessions, speakers, and slides. But sometimes the most remarkable person in the room is just beside you, doing their thing without announcement. You only find out if you say hello.

That moment reminded me why showing up matters.

Noticing my own shift

A few years ago, I was mostly on the absorbing side of rooms like this. I would try to catch every idea from people who seemed much further ahead, then go home and slowly unpack what I had missed.

This time felt different.

I still learned a lot from senior engineers talking about AI adoption, automation, and workflow design. But I also found myself explaining work culture and the industry to college students who were just starting out.

Noticing that shift caught me off guard.

I am still early in my developer journey. Very early, honestly. But there is a strange moment when you realize you are no longer only receiving context. You have started carrying some context for others too.

That does not make you finished. It just means the ladder has more than one direction. You look up and learn. You look sideways and connect. You look back and help someone climb the first few steps with a little less confusion.

What I carried back

The event left me with three thoughts I am still turning over.

First, AI agents need engineering discipline more than hype. Permissions, review loops, and blast-radius thinking are not boring details. They are the difference between a helpful assistant and a very expensive mistake.

Second, good constraints are creative infrastructure. The best AI workflows will probably come from people who can define the boundaries well, not from people who only write longer prompts.

Third, developer community still matters. You can watch talks online, and you should. But being in the room adds a different kind of signal. You hear the side comments. You meet the person next to you. You feel where the industry is nervous, excited, and quietly changing.

I left equal parts overwhelmed and excited. The pace is relentless. The possibilities are absurd. And rooms like this make me feel like I am exactly where I should be.

Grateful for rooms like this. See you at the next one.